We recently reviewed a codebase that was built almost entirely by AI. Not a prototype. Not a side project. A live, paying SaaS platform with real users, Stripe billing, and an enterprise feature set.

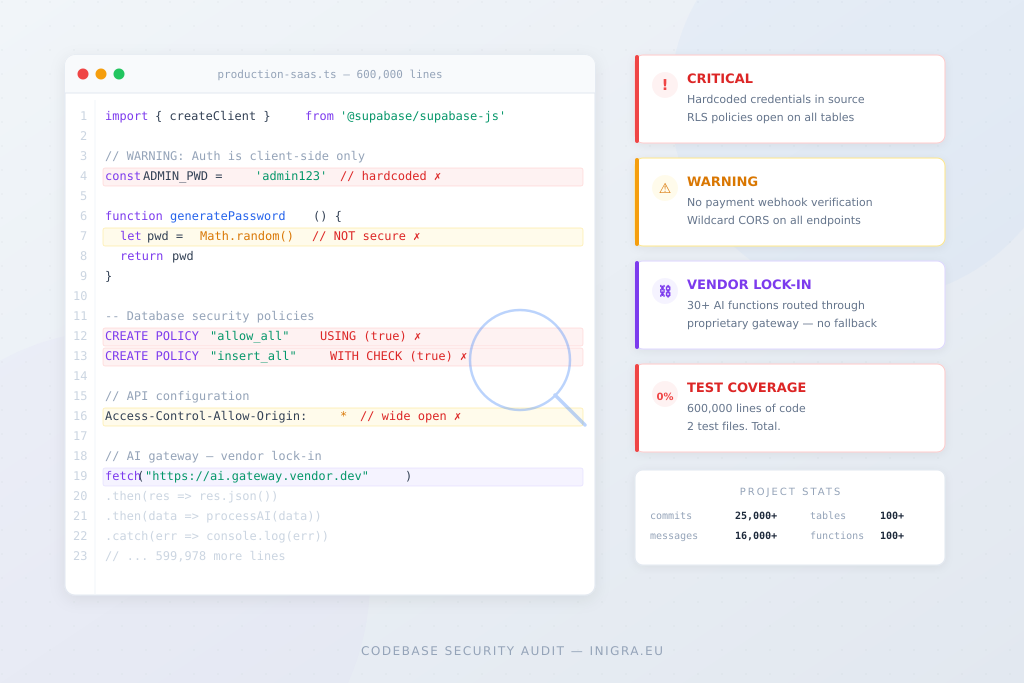

The numbers are staggering:

- Over 600,000 lines of TypeScript

- Over 25,000 GitHub commits

- Nearly 16,000 messages to the AI builder

- Over 8,000 AI-generated edits

- 100+ database tables

- 100+ serverless functions

- 500+ database migrations

- Built in under 6 months by a solo non-technical founder

By any measure, this is an impressive achievement. A single person with no coding background built a production SaaS platform that would have taken a traditional development team 12-18 months and six figures in budget. The UI is polished. The feature set is comprehensive. Users are signing up and paying.

But then we looked under the hood.

The platform dependency nobody talks about

The biggest issue wasn’t a bug. It wasn’t a missing feature. It was architecture.

The platform relies heavily on AI-powered features – they’re the core product, not a nice-to-have. Content generation, analysis, translation, recommendations – all powered by AI. In total, over 30 serverless functions handle AI operations.

Every single one of them routes through the no-code platform’s proprietary AI gateway.

Not OpenAI directly. Not Anthropic. Not any API the founder controls. A gateway URL owned and operated by the platform the app was built on.

This means:

- If the founder ever wants to leave the no-code platform, all 30+ AI features break instantly

- The founder has no control over which AI models are used, what rate limits apply, or what the per-request cost is

- The no-code platform can change pricing, throttle access, or shut down the gateway at any time

- There is no fallback. Zero direct API calls exist anywhere in the codebase

The founder built an entire business on top of infrastructure they don’t control and can’t replicate. The AI features ARE the product – without them, the platform is an empty shell. That’s not a technical limitation. That’s a business risk most founders don’t know they’re carrying.
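
One common way to reduce this kind of lock-in is to have every AI feature depend on a small interface rather than on a gateway URL, with providers tried in order. The sketch below is illustrative only: `TextGenerator`, `generateWithFallback`, and the two stand-in providers are hypothetical names, not anything found in the reviewed codebase, and real providers would wrap actual HTTP calls.

```typescript
// Hypothetical interface: features depend on this, not on a specific gateway.
interface TextGenerator {
  name: string;
  generate(prompt: string): Promise<string>;
}

// Try each provider in order and return the first successful result.
async function generateWithFallback(
  providers: TextGenerator[],
  prompt: string,
): Promise<{ provider: string; text: string }> {
  const failures: string[] = [];
  for (const provider of providers) {
    try {
      const text = await provider.generate(prompt);
      return { provider: provider.name, text };
    } catch (err) {
      const reason = err instanceof Error ? err.message : String(err);
      failures.push(`${provider.name}: ${reason}`);
    }
  }
  throw new Error(`All providers failed (${failures.join("; ")})`);
}

// Stand-in providers for illustration; neither makes a network call.
const platformGateway: TextGenerator = {
  name: "platform-gateway",
  generate: async () => {
    throw new Error("gateway unavailable");
  },
};

const directApi: TextGenerator = {
  name: "direct-api",
  generate: async (prompt) => `echo: ${prompt}`,
};
```

With this shape, leaving the platform means writing one new provider rather than touching 30+ functions.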

Security: the patterns AI always leaves behind

We’ve now reviewed dozens of AI-generated codebases. The same patterns show up every single time. This project was no different.

Passwords generated with Math.random()

Two separate functions generate temporary passwords for new users using JavaScript’s Math.random(). This is not cryptographically secure – the generator is deterministic, so an attacker who recovers its internal state can predict and reproduce its output. The fix is one line (crypto.getRandomValues()), but the AI chose the simpler option every time.
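
As a minimal sketch of the replacement, assuming the runtime exposes the Web Crypto `crypto` global (browsers, Deno, and recent Node versions do): the function name, the alphabet, and the default length are illustrative choices, not taken from the reviewed project.

```typescript
// Alphabet without look-alike characters (no I, O, l, o, 0, 1).
const PASSWORD_ALPHABET =
  "ABCDEFGHJKLMNPQRSTUVWXYZabcdefghijkmnpqrstuvwxyz23456789";

function generateTempPassword(length = 16): string {
  const n = PASSWORD_ALPHABET.length;
  // Largest multiple of n that fits in one byte. Bytes at or above this
  // limit are discarded so every character is equally likely (no modulo bias).
  const limit = 256 - (256 % n);
  let out = "";
  while (out.length < length) {
    const bytes = crypto.getRandomValues(new Uint8Array(length));
    for (let i = 0; i < bytes.length && out.length < length; i++) {
      if (bytes[i] < limit) out += PASSWORD_ALPHABET[bytes[i] % n];
    }
  }
  return out;
}
```

Calling `generateTempPassword()` returns a 16-character string. A temporary password should still expire quickly and be replaced on first login.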

Row-level security that started wide open

The database had RLS enabled across the board with nearly 500 security policies – impressive numbers. But for the first two months of the project, critical tables had policies set to USING (true), meaning any authenticated user could read, modify, or delete any row in those tables. The AI eventually generated a security fix migration, but the platform was live with open policies for weeks before that happened.

Wildcard CORS on every endpoint

Every single serverless function uses Access-Control-Allow-Origin: *. This means JavaScript running on any website can call the platform’s API endpoints from a visitor’s browser and read the responses. For a SaaS handling user data and processing payments, this should be locked down to the platform’s own domain.
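
A minimal sketch of an allowlist-based alternative is shown below. The origin `https://app.example.com` and the helper name `corsHeaders` are placeholders; the idea is simply to echo the request's origin back only when it is on a known list.

```typescript
// Assumed allowlist; replace with the platform's real origins.
const ALLOWED_ORIGINS = new Set<string>(["https://app.example.com"]);

// Build CORS response headers for a given request Origin header value.
function corsHeaders(requestOrigin: string | null): Record<string, string> {
  // "Vary: Origin" tells caches that the response depends on the caller's origin.
  const headers: Record<string, string> = { Vary: "Origin" };
  if (requestOrigin !== null && ALLOWED_ORIGINS.has(requestOrigin)) {
    headers["Access-Control-Allow-Origin"] = requestOrigin;
  }
  // For unknown origins the header is omitted, so browsers block the response.
  return headers;
}
```

CORS only governs what browsers allow. Each endpoint still needs its own authentication and authorization checks.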

No payment webhook verification

The platform charges users via Stripe. It has a checkout flow and a customer portal. But there is no Stripe webhook handler to verify that payments actually completed. Credits reset monthly via a cron job, based on a date stored in the database – regardless of whether Stripe successfully collected the payment. If a card declines, the user could still receive their monthly credits.
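
Stripe signs each webhook by computing an HMAC-SHA256 over `timestamp.payload` with the endpoint's signing secret and sending the result in the Stripe-Signature header. The official `stripe` library provides `stripe.webhooks.constructEvent` for this check, and that is usually the better choice. The sketch below re-implements it with Node's `node:crypto` only to show what is being verified. The function names, the 300-second tolerance, and the choice to grant credits on `invoice.paid` are assumptions for illustration.

```typescript
import { createHmac, timingSafeEqual } from "node:crypto";

// Returns true only if the header carries a fresh, valid v1 signature
// for exactly this raw request body.
function verifyStripeSignature(
  rawBody: string,
  signatureHeader: string,
  secret: string,
  nowSeconds: number = Math.floor(Date.now() / 1000),
  toleranceSeconds = 300,
): boolean {
  let timestamp = "";
  const candidates: string[] = [];
  for (const part of signatureHeader.split(",")) {
    const idx = part.indexOf("=");
    if (idx === -1) continue;
    const key = part.slice(0, idx).trim();
    const value = part.slice(idx + 1).trim();
    if (key === "t") timestamp = value;
    if (key === "v1") candidates.push(value);
  }
  if (!/^\d+$/.test(timestamp)) return false;
  // Reject old or future-dated events to limit replay attacks.
  if (Math.abs(nowSeconds - Number(timestamp)) > toleranceSeconds) return false;

  const expected = createHmac("sha256", secret)
    .update(`${timestamp}.${rawBody}`, "utf8")
    .digest();
  return candidates.some((hex) => {
    // A SHA-256 digest is 32 bytes, i.e. 64 hex characters.
    if (!/^[0-9a-f]{64}$/i.test(hex)) return false;
    return timingSafeEqual(Buffer.from(hex, "hex"), expected);
  });
}

// Assumed policy: credits are granted only after a verified "invoice.paid" event,
// never by a date-based job alone.
function shouldGrantCredits(signatureValid: boolean, eventType: string): boolean {
  return signatureValid && eventType === "invoice.paid";
}
```

The signature must be computed over the raw request body, so the handler has to read the body as text before any JSON parsing.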

The testing gap

The project has over 600,000 lines of code and 2 test files.

Two.

One tests accessibility. One tests a styling utility function. There are zero tests for:

- Authentication flows

- Payment processing

- Credit deduction logic

- Any of the 100+ serverless functions

- Database operations

- User permissions and role-based access

This isn’t unusual for AI-generated code. The AI builds features when you ask for features. It doesn’t write tests unless you specifically ask for tests. And most non-technical founders don’t know to ask.
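
To give a sense of how small a useful first test can be, here is a sketch for credit deduction using Node's built-in `node:assert`. The `deductCredits` function and its rules are hypothetical; the real project's credit logic was not published, so this only illustrates the shape of such a test.

```typescript
import { strict as assert } from "node:assert";

// Hypothetical credit rule: a deduction must be a positive whole number
// and must never drive the balance below zero.
function deductCredits(balance: number, cost: number): number {
  if (!Number.isInteger(cost) || cost <= 0) {
    throw new RangeError("cost must be a positive integer");
  }
  if (cost > balance) {
    throw new RangeError("insufficient credits");
  }
  return balance - cost;
}

// Runs the checks and returns how many passed; any failure throws.
function runCreditTests(): number {
  let passed = 0;
  assert.equal(deductCredits(10, 3), 7);
  passed++;
  assert.equal(deductCredits(3, 3), 0); // spending the exact balance is allowed
  passed++;
  assert.throws(() => deductCredits(2, 3), RangeError); // overdraft is rejected
  passed++;
  assert.throws(() => deductCredits(10, 0), RangeError); // zero cost is rejected
  passed++;
  assert.throws(() => deductCredits(10, -5), RangeError); // negative cost is rejected
  passed++;
  return passed;
}
```

The edge cases (exact balance, overdraft, zero and negative cost) are the ones most likely to be missed when a feature is built by prompt alone.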

Performance: death by fonts

The app loads 11 Google Font families on every single page. That’s roughly 500KB of font files before any content renders. Most pages use one or two of these fonts. The rest are loaded and never used.

This is a classic AI pattern – the founder asked for a font change at some point, the AI added it, and the previous font imports were never removed. Multiply this by hundreds of iterations and you get a bloated page load.

The 3,000-line function

One serverless function is over 3,000 lines long. Another exceeds 1,500 lines. A well-structured function is typically 50-200 lines. These are unmaintainable by any developer – human or AI.

The AI doesn’t refactor. It adds. When a function needs new capability, the AI appends code rather than restructuring. Over thousands of iterations, functions grow into monoliths that no one can safely modify without breaking something else.

What the AI got right

It would be dishonest to only talk about what went wrong. The AI built genuinely impressive things:

- A complete multi-role authentication system with session management and audit logging

- A sophisticated credit-based billing model with organisation-level pooling

- Comprehensive RBAC (role-based access control) with granular permissions

- A security fix migration that identified and replaced overly permissive RLS policies

- Clean component architecture with proper separation of concerns

- Over 120 custom React hooks for data fetching and state management

- Full internationalisation support

The founder built a platform that competes with established products that took years and millions to develop. That’s real. The AI made it possible.

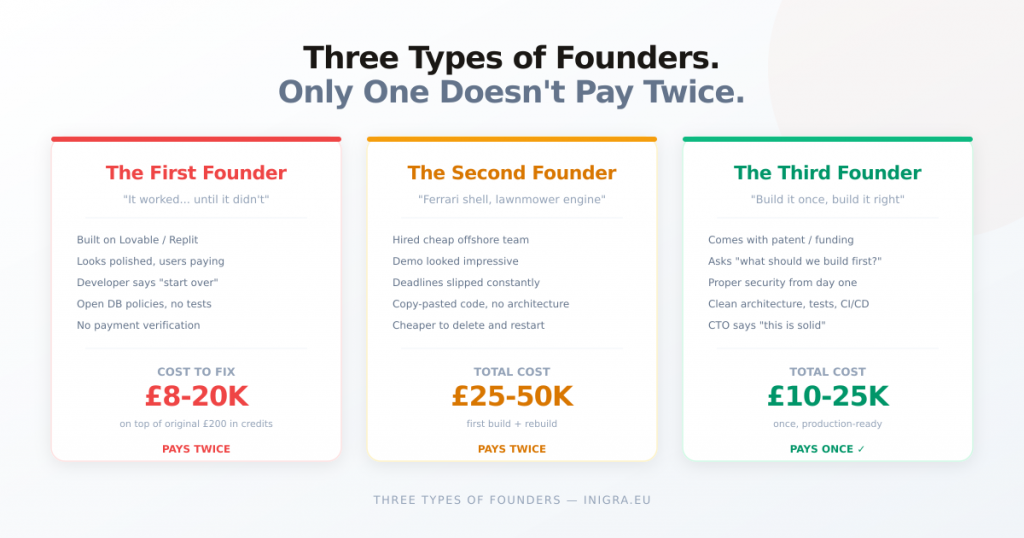

The uncomfortable truth

The problem isn’t that AI-generated code is bad. Much of it is good. The problem is that AI-generated code is consistently good at the things that are visible (UI, features, user flows) and consistently weak at the things that are invisible (security, performance, testing, architecture decisions, vendor dependencies).

A founder looking at their working app sees a product ready to scale. A developer looking at the same codebase sees a list of things that will break under pressure.

The 600,000 lines work today. The question is whether they’ll work when:

- A security researcher finds the wildcard CORS

- A card payment fails and credits still reset

- The no-code platform changes its AI gateway pricing

- A user discovers they can access another user’s data through a stale RLS policy

- The 3,000-line function needs a bug fix and changing one line breaks three features

None of these are hypothetical. We’ve seen every one of them happen in production.

What to do about it

If you’ve built your product on a no-code platform with AI, here’s what we’d recommend:

Right now (free, takes an hour):

- Connect your project to GitHub if you haven’t already

- Search your codebase for Math.random in any security context – replace with crypto.getRandomValues()

- Check your database policies – search for USING (true) and understand which tables are wide open

- Check if your serverless functions use wildcard CORS – lock it down to your domain
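
The three searches above can be sketched as a small scanner that takes a file's text and reports matching lines. The rule names and regular expressions are illustrative assumptions, and they will produce false positives (for example, Math.random used for animation), so each hit needs a human look.

```typescript
interface Finding {
  line: number; // 1-based line number within the scanned text
  rule: string;
  text: string;
}

// Illustrative patterns only; tune them to your own codebase.
const RULES: { rule: string; pattern: RegExp }[] = [
  { rule: "Math.random", pattern: /Math\.random\s*\(/ },
  { rule: "open RLS policy", pattern: /USING\s*\(\s*true\s*\)/i },
  {
    rule: "wildcard CORS",
    pattern: /Access-Control-Allow-Origin["']?\s*[:,]\s*["']\*["']/i,
  },
];

// Scan one file's contents line by line and collect every rule match.
function scanSource(source: string): Finding[] {
  const findings: Finding[] = [];
  source.split(/\r?\n/).forEach((text, index) => {
    for (const { rule, pattern } of RULES) {
      if (pattern.test(text)) {
        findings.push({ line: index + 1, rule, text: text.trim() });
      }
    }
  });
  return findings;
}
```

Running `scanSource` over each TypeScript file and SQL migration gives a starting list to review, not a verdict.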

Before you scale:

- Get an independent security review. Not from the AI that wrote the code – from a human who understands what to look for

- Verify your payment flow end-to-end. Does a failed payment actually prevent access?

- Identify your platform dependencies. Can you leave your current provider without losing core features?

- Add tests for your critical paths: authentication, payments, and any function that touches user data

Before you raise funding or sell:

- Any technical due diligence will find these issues. Better to fix them before investors or buyers look under the hood

- A codebase audit typically costs a fraction of what the findings would cost you if discovered in production

The AI got you to market. That’s the hard part. Now it’s time to make sure what you built can survive contact with the real world.

We audit AI-generated codebases every week. If you want to know what’s in yours before your users find out, we offer free security reviews for projects built on Lovable, Replit, Base44, and Bolt. Details at inigra.eu/nocodemigration